Research

Research at the Uncertainty Quantification Lab spans statistical machine learning, uncertainty-aware prediction, and scientific modeling.

Our lab develops statistically principled methods for complex mathematical models, deep neural networks, and large-scale scientific data. The work spans conformal prediction, Bayesian inference, tabular foundation models, and physics-informed learning, with applications to climate science, disease forecasting, and biomedical research.

This page gives prospective students an overview of our ongoing research directions. Each section below describes one topic and shows representative outputs.

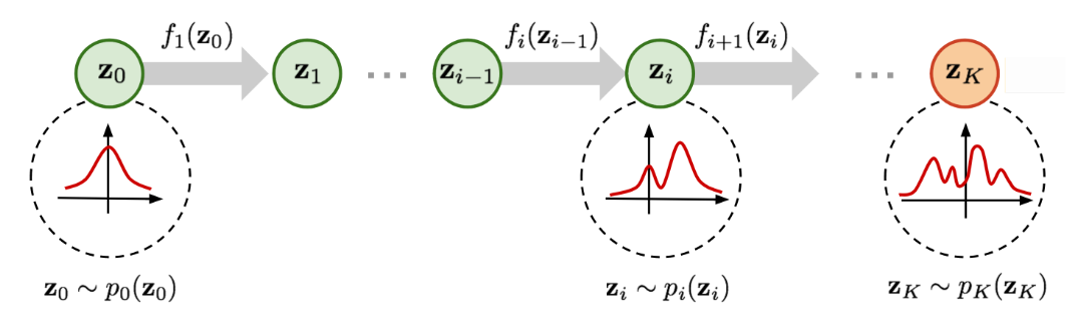

Normalizing Flows and Bayesian Inference

We develop normalizing-flow methods for fast posterior approximation in difficult inverse problems and other scientific Bayesian computation settings.

This line of work emphasizes flexible posterior approximation and scalable Bayesian workflows, with a focus on simulation-based scientific inference.

Recent themes

- Amortized posterior inference

- Complex posterior geometry

- Computationally efficient Bayesian workflows

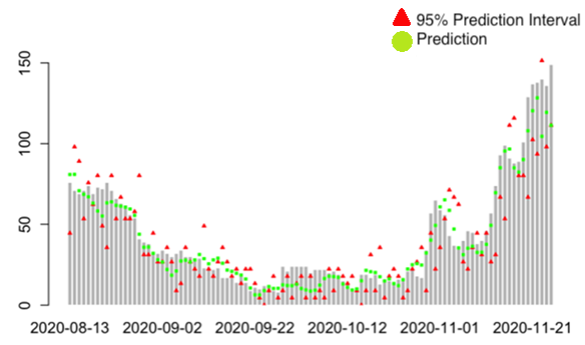

Conformal Prediction

We investigate conformal approaches for risk-controlled prediction sets and calibrated uncertainty in mathematical and machine learning models.

We develop conformal tools that provide finite-sample uncertainty guarantees and adapt them to scientific models, forecasting problems, and structured prediction tasks.
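As a rough illustration of what a finite-sample guarantee means, the sketch below runs split conformal prediction on synthetic data. It is a minimal textbook example, not the lab's own method: the `predict` function, the data-generating process in `simulate`, and the miscoverage level `alpha = 0.1` are all assumptions made for the demonstration.

```python
import math
import random


def conformal_quantile(scores, alpha):
    """Finite-sample-corrected quantile of the calibration scores."""
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))  # rank of the score to use
    if k > n:
        return math.inf  # too few calibration points for this alpha
    return sorted(scores)[k - 1]


def predict(x):
    # Hypothetical pre-fitted point predictor; any model could stand in here.
    return 2.0 * x


def simulate(n, rng):
    # Synthetic data (assumption): linear signal plus Gaussian noise.
    xs = [rng.uniform(0, 1) for _ in range(n)]
    ys = [2.0 * x + rng.gauss(0, 0.3) for x in xs]
    return xs, ys


rng = random.Random(0)
x_cal, y_cal = simulate(500, rng)     # held-out calibration set
x_test, y_test = simulate(2000, rng)  # fresh test points

# Nonconformity score: absolute residual on the calibration set.
scores = [abs(y - predict(x)) for x, y in zip(x_cal, y_cal)]
q = conformal_quantile(scores, alpha=0.1)

# The prediction interval for a new x is [predict(x) - q, predict(x) + q].
covered = sum(abs(y - predict(x)) <= q for x, y in zip(x_test, y_test))
coverage = covered / len(y_test)
print(f"half-width = {q:.3f}, empirical coverage = {coverage:.3f}")
```

Under exchangeability of calibration and test points, intervals built this way cover the true response with probability at least `1 - alpha`, whatever the quality of the underlying predictor. A poor predictor only makes the intervals wider.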

Recent themes

- Conformal risk control

- Prediction intervals for simulator outputs

- Applications to disease forecasting and computer models

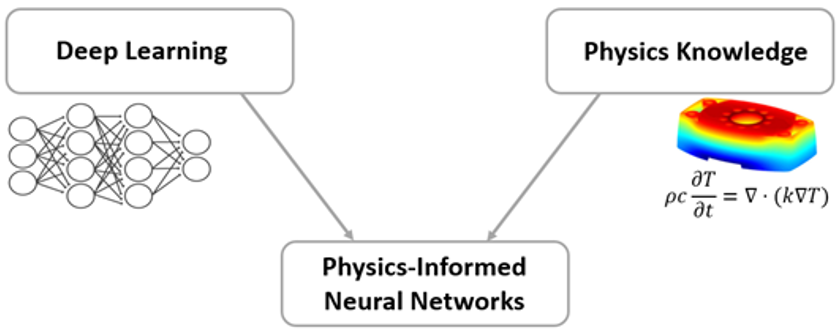

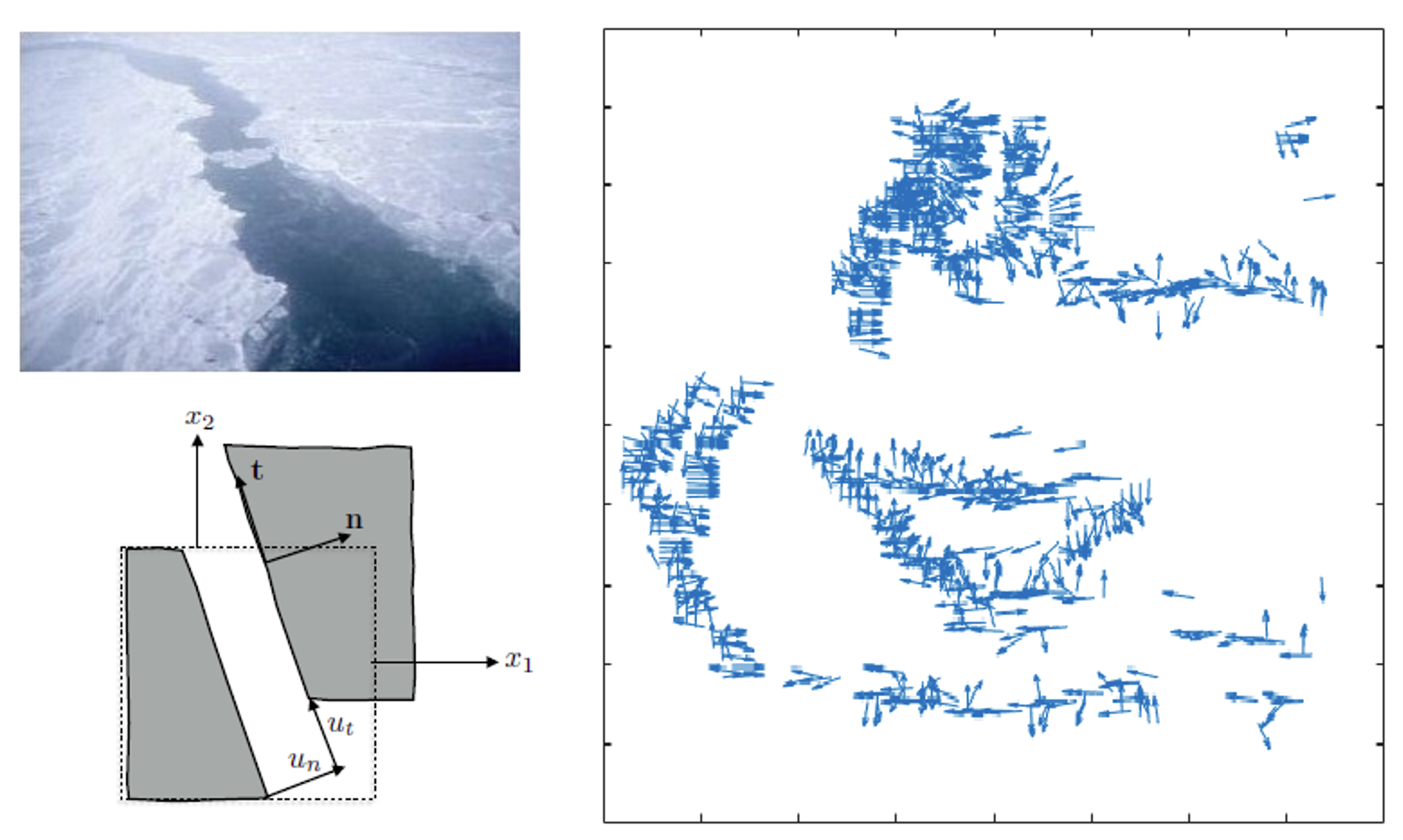

Physics-Informed Machine Learning

We combine physical knowledge with modern neural architectures to improve prediction, reconstruction, and uncertainty quantification for scientific data, with a particular focus on complex spatio-temporal systems.

Recent themes

- Physics-informed neural networks

- Diffusion-based reconstruction

- Spatio-temporal scientific data analysis

Variational Deep Neural Networks

We study variational deep learning methods for complex regression problems whose inputs are structured spatial or temporal observations from scientific systems.

We focus on statistically grounded deep learning methods for structured scientific data, especially when reliable uncertainty estimates matter as much as raw predictive accuracy.

Recent themes

- Statistical learning with high-dimensional scientific inputs

- Uncertainty-aware prediction for structured covariates

- Applications to climate and cosmology

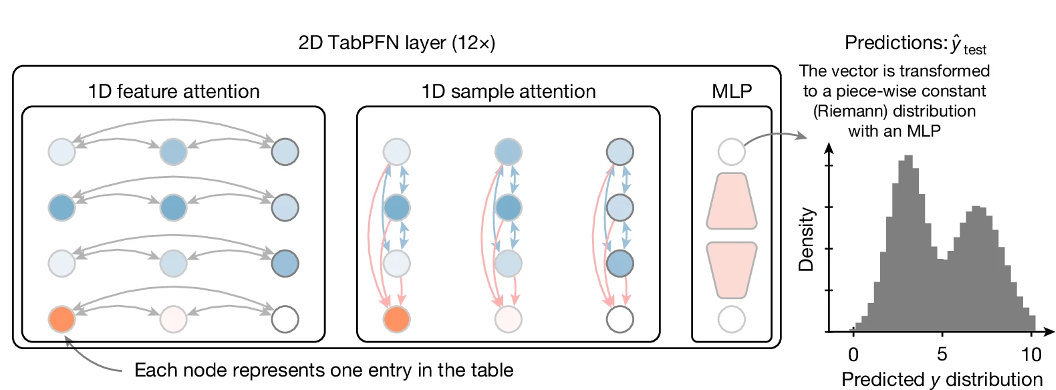

Tabular Foundation Models

We analyze and improve tabular foundation models for reliable prediction, calibration, and uncertainty quantification in domains such as healthcare, finance, and environmental science.

Our work studies how modern tabular foundation models behave under distribution shift, under strict calibration requirements, and in real decision-making settings where trustworthy deployment is essential.

Recent themes

- Reliable extrapolation and calibration

- Uncertainty analysis of Transformer-based tabular models

- Benchmarks for trustworthy deployment